19 Tech Pioneers You’ve Probably Never Heard Of

History has a bad habit of remembering the wrong people. You know Bill Gates, Steve Jobs, and Mark Zuckerberg.

But the people who actually built the foundations those names stood on? Most of them never made the covers of magazines.

Some never got so much as a footnote. These are the engineers, mathematicians, and tinkerers whose work quietly shaped the digital world you use every day — and whose names deserve to be spoken out loud.

Hedy Lamarr — The Actress Who Helped Invent Wi-Fi

Before there was Wi-Fi, Bluetooth, or GPS, there was Hedy Lamarr: Hollywood actress and, quietly, one of the most inventive minds of her era. During World War II, she and composer George Antheil patented a frequency-hopping communication system designed to keep enemy forces from jamming radio-guided torpedoes.

The U.S. Navy shelved the idea for decades, but frequency hopping became one of the core techniques of spread-spectrum radio. Bluetooth still hops between frequencies today, and the earliest Wi-Fi standard offered a frequency-hopping mode too.

She received no royalties. The patent had expired by the time anyone paid attention.

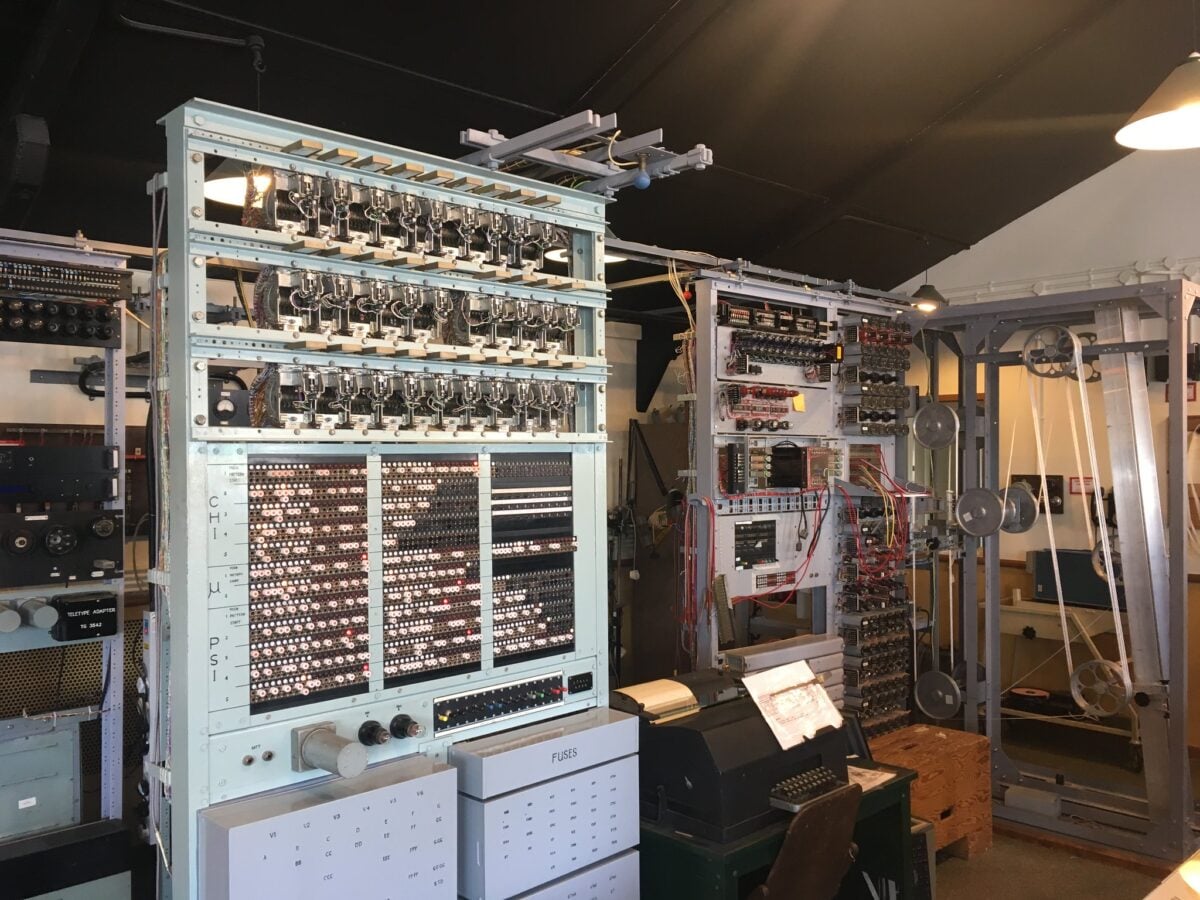

Tommy Flowers — The Man Who Built the First Programmable Electronic Computer

Most people credit ENIAC as the first programmable electronic computer. But Tommy Flowers, a British Post Office engineer, had Colossus running at Bletchley Park by early 1944, about two years before ENIAC was unveiled. It was built to help break the Lorenz cipher used by the German High Command.

It worked. The problem was that its existence stayed classified for decades after the war, long enough for history to move on without him.

Flowers died in 1998, recognized only late in his life for a contribution that changed the course of the war and the history of computing.

Radia Perlman — The Engineer Whose Protocol Holds the Internet Together

Radia Perlman invented the Spanning Tree Protocol (STP) in 1985, an algorithm that lets network bridges agree on a loop-free path through a network, so that traffic doesn’t circle endlessly and bring everything crashing down. Large Ethernet networks, the kind that connect offices, campuses, and data centers, came to depend on it.

She’s also done significant work in network security and has written one of the most widely used networking textbooks in computer science programs worldwide. She tends to push back on being called “the mother of the internet,” saying she finds the label reductive.

Fair enough. The work speaks for itself.

Fernando Corbató — The Inventor of the Password

The computer password was Fernando Corbató’s idea. In the early 1960s, he was working on the Compatible Time-Sharing System (CTSS) at MIT, a system that allowed multiple users to share a single mainframe.

Since each user had their own files, they needed a way to keep things private. He created passwords as a quick, practical solution.

In a 2014 interview, he admitted the whole thing had become “kind of a nightmare.” Dozens of passwords for dozens of systems, impossible to remember, often reused.

He saw the problem clearly. He just never got the chance to fix it.

Elizabeth “Jake” Feinler — The Person Who Ran the Early Internet’s Address Book

Before there were domain registrars, before there was ICANN, before .com existed as a concept, Elizabeth Feinler ran the Network Information Center at SRI International. Her team maintained the host tables that mapped computer names to addresses on ARPANET — essentially the internet’s first phone book.

She and her team also developed the domain naming conventions that led directly to .com, .edu, .gov, and .org. The next time you type a URL into your browser, you’re using a system she helped create.

Gary Kildall — The Man Who Almost Sold IBM Its Operating System

In 1980, IBM needed an operating system for its new personal computer. One of its first calls was to Gary Kildall.

Kildall had already built CP/M, the most widely used operating system for microcomputers at the time. The meeting, famously, didn’t go well, and accounts differ on exactly why.

IBM turned to Bill Gates instead, whose company Microsoft licensed and then bought 86-DOS from a small Seattle firm and supplied it to IBM as the basis of MS-DOS. CP/M faded.

MS-DOS took over. Kildall’s place in history got reduced to a footnote about the meeting that didn’t happen.

He died in 1994, largely forgotten outside of computer history circles.

Paul Baran — The Man Who Invented Packet Switching

Every message, video, and photo you send across the internet travels in packets: small chunks of information that are sent separately and reassembled at the destination.

Paul Baran developed this concept in the early 1960s while working at RAND Corporation, originally as a way to build communication networks that could survive a nuclear attack. His proposal was largely ignored for years.

When ARPANET’s designers built the network later in the decade, they drew on his ideas alongside the independent work of Donald Davies in Britain, who gave the “packet” its name. Baran’s own name rarely made it into the popular story.

Sister Mary Kenneth Keller — The First Woman to Earn a Computer Science PhD

In 1965, Sister Mary Kenneth Keller became the first woman in the United States to earn a doctoral degree in computer science. She is often credited with contributing to BASIC at Dartmouth, the programming language that made computers accessible to millions of non-specialists, and she spent her career pushing for computer access in education long before most institutions took the idea seriously.

She believed computers would change how people learn. She was right.

Most histories of BASIC barely mention her name.

Sophie Wilson — The Architect of the Chip in Your Pocket

Sophie Wilson designed the instruction set for the ARM processor while working at Acorn Computers in the mid-1980s. ARM chips, known for their energy efficiency, now power the vast majority of the world’s smartphones, tablets, and embedded devices.

The processor in your phone almost certainly runs on an architecture she helped create. Before ARM, Wilson co-designed the BBC Micro and wrote its BBC BASIC language, and that computer introduced a generation of British schoolchildren to programming.

In the UK, her influence on tech literacy is hard to overstate. Elsewhere, she’s barely known at all.

Adele Goldberg — The Researcher Who Showed Apple What a Personal Computer Could Be

When Apple’s engineers visited Xerox PARC in 1979, they saw the graphical user interface, the mouse, and overlapping windows — ideas that would shape the Macintosh. Adele Goldberg, a computer scientist at PARC, was one of the people in that room.

She’d spent years helping develop Smalltalk, the object-oriented programming language and environment that underpinned much of what Apple saw that day. She also reportedly objected to the visit, warning that showing Apple’s engineers the lab’s work was probably a mistake.

She was right. Apple took those ideas and built a company worth trillions.

Goldberg’s name rarely comes up in the story.

John Backus — The Programmer Who Made Programming Human

Before John Backus, writing software meant speaking directly to the machine, a process that was tedious, painstaking, and deeply unforgiving. In 1957, Backus led the team at IBM that released FORTRAN, the first widely used high-level programming language.

It let engineers and scientists write code that looked something like ordinary math, rather than rows of machine instructions. He later developed the notation now known as Backus-Naur Form (BNF), which describes the grammar of programming languages and is still in use today.

Nearly every programming language that followed — Python, Java, C, all of them — owes something to what Backus built.

Ray Tomlinson — The Engineer Who Chose the @ Symbol

In 1971, Ray Tomlinson sent the first network email between two computers sitting side by side in the same room. More importantly, he chose the @ symbol to separate a user’s name from their host machine, a decision so practical and so lasting that New York’s Museum of Modern Art added the symbol to its design collection in 2010.

Tomlinson later said he couldn’t remember what the first message said. It was probably just a test.

He died in 2016. You’ve been using his punctuation decision every day of your digital life.

Corrinne Yu — The Engineer Who Made Games Run Faster

Corrinne Yu spent years as one of the most respected game engine programmers in the industry, working on titles such as Halo 4. Her work on optimizing physics simulations and graphics pipelines pushed what was possible on console hardware at the time.

She later moved to Naughty Dog and then to Amazon. In an industry that produces a lot of visible stars, she was always the person making the machinery work better: recognized inside the industry, largely invisible outside it.

Claude Shannon — The Father of Information Theory

Claude Shannon published “A Mathematical Theory of Communication” in 1948 and, in doing so, created an entirely new field. His work established that information could be quantified, measured, and transmitted efficiently — and laid the mathematical groundwork for everything from data compression to cryptography to digital storage.

The bit, the fundamental unit of digital information, comes directly from his framework. Shannon was known for being eccentric and brilliant in equal measure.

He rode a unicycle through the halls of Bell Labs and juggled while thinking. He died in 2001, and while information theory specialists revere him, his name never became a household word.

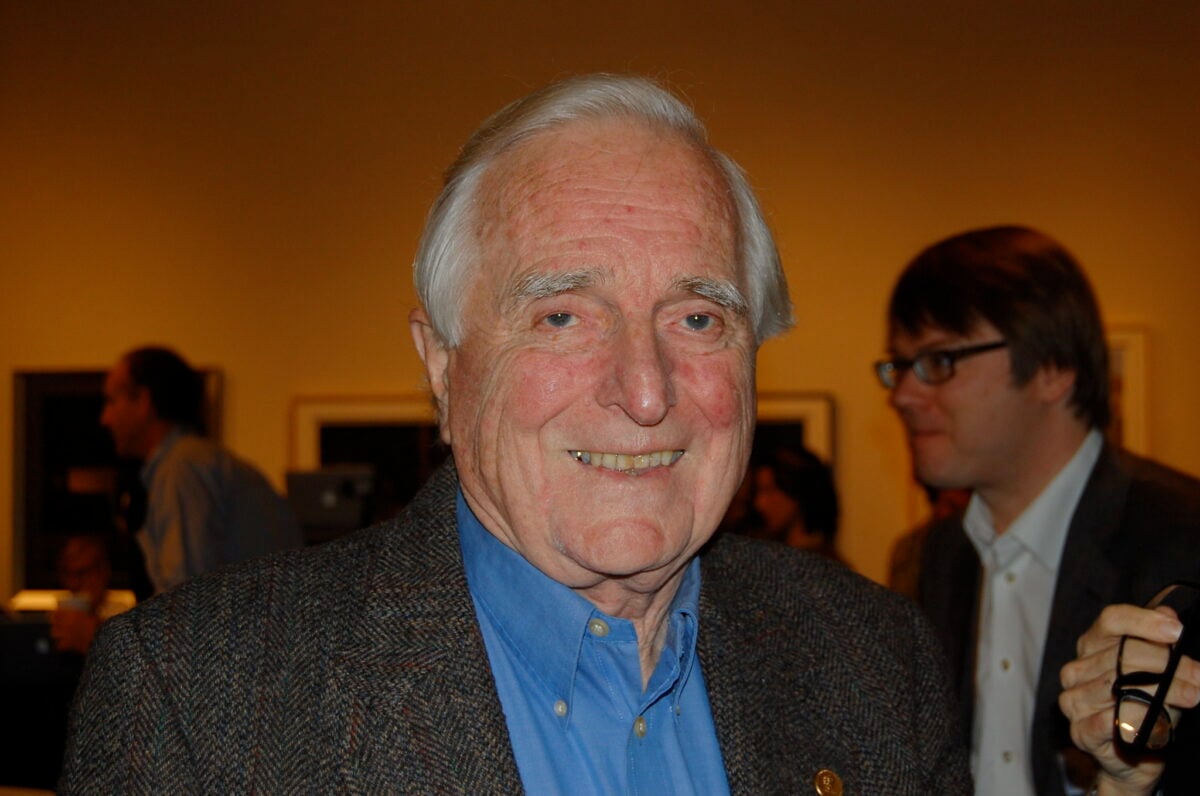

Douglas Engelbart — The Inventor Who Showed the Future

In 1968, Douglas Engelbart gave a live demonstration that introduced the world to the computer mouse, video conferencing, hypertext, collaborative real-time editing, and the graphical user interface — all in one 90-minute presentation. It’s now called “The Mother of All Demos.”

Almost everything he showed that day took another two decades to become standard. Engelbart spent much of the rest of his career trying to get people to take his bigger vision seriously: that computers should primarily be tools for augmenting human intelligence.

He never quite got the recognition the demo deserved while he was still working.

Nathaniel Rochester — The Man Behind the First Commercial Scientific Computer

Nathaniel Rochester led the team at IBM that designed the IBM 701, the company’s first commercial scientific computer, announced in 1952. He is also credited with writing the first assembler, a program that translates human-readable code into machine instructions.

And in 1956, he co-organized the Dartmouth Conference, the workshop that launched artificial intelligence as a field of research. He’s connected to more landmark moments in tech history than almost anyone, and almost nobody knows his name.

Stephanie “Steve” Shirley — The Entrepreneur Who Pioneered Remote Work

In 1962, Stephanie Shirley founded a software company in the UK called Freelance Programmers, hiring women who had been pushed out of the workforce after marriage or motherhood. She built a distributed team of programmers working from home decades before that was considered a real business model.

She signed business letters as “Steve” because letters signed with a woman’s name were too often ignored. Among the company’s projects was programming the black box flight recorder for the Concorde supersonic aircraft.

The company eventually grew to employ thousands and was valued at hundreds of millions of pounds. She later became one of Britain’s most generous philanthropists.

John McCarthy — The Man Who Named Artificial Intelligence

John McCarthy coined the term “artificial intelligence” in 1955, in the proposal for the Dartmouth Conference. He invented the programming language LISP, which became the dominant language of AI research for decades and is still used today.

He was an early champion of time-sharing, which lets multiple users share a single machine. He predicted that computers would eventually be able to reason, and he spent his career trying to make that happen.

He lived long enough to see AI become one of the biggest topics in the world. He died in 2011, just as the field was starting to reach the kind of scale he’d imagined.

Whether that would have pleased him or frustrated him is anyone’s guess.

Alan Kay — The Visionary Who Designed the Computer You’re Using Right Now

Alan Kay imagined the personal computer before most people knew what a computer was. At Xerox PARC in the 1970s, he led the team that created Smalltalk, one of the first object-oriented programming languages, and conceptualized the Dynabook, a portable, personal computing device that looks, in retrospect, almost exactly like a modern tablet.

He helped design the graphical interfaces that Apple and Microsoft later adopted. He’s also the person who said “the best way to predict the future is to invent it.”

He meant it literally. He spent his career inventing it.

The laptop on your desk, the interface you navigate with a mouse, the way programs are structured — Kay’s fingerprints are on all of it.

The Names That Got Left Behind

History tends to compress. It picks a few faces, a few names, and builds the story around them.

That’s convenient, but it’s never fully true. The digital world didn’t come from a handful of visionaries working alone.

It came from thousands of people solving specific problems, often without recognition, often without credit, often without knowing the full scale of what they were building. The pioneers on this list didn’t all want fame.

Some actively avoided it. But knowing their names — and what they actually did — gives you a more honest picture of where the technology around you came from.

And maybe a healthier sense of how progress actually works: slowly, collectively, and usually in rooms where no cameras are rolling.
