The Security Robot That Drowned Itself

A security robot patrolling an office complex in Washington, D.C. took an unexpected swim. The robot, designed to detect potential threats and suspicious activity, rolled straight into a fountain and drowned.

The machine apparently couldn’t detect water as a hazard, which seems like a pretty fundamental oversight for something meant to patrol outdoor spaces. The incident became an internet sensation, with people joking that the robot had chosen to end it all rather than continue its monotonous patrol duties.

But the real issue was more mundane—the sensors simply failed to recognize the water feature as something to avoid. The company had to fish out their expensive investment and explain to their clients why their security solution needed rescuing.

Hitchhiking Robot’s American Tragedy

HitchBOT was a Canadian creation meant to test human kindness. The friendly robot successfully hitchhiked across Canada, Germany, and the Netherlands, relying on strangers to move it from place to place.

Then it tried America. The robot lasted about two weeks before someone destroyed it in Philadelphia.

Stripped for parts and left on the side of the road, HitchBOT’s American adventure ended badly. The robot wasn’t really doing a job in the traditional sense, but it failed spectacularly at its mission of proving humans would help a harmless traveler.

The incident said more about people than technology, but the robot still ended up in pieces.

The Mall Cop That Knocked Down a Toddler

Knightscope security robots patrol shopping centers and business parks, looking official with their egg-shaped bodies and sensor arrays. One of these robots was doing its rounds at a California mall when it collided with a 16-month-old boy, knocking him down and running over his foot.

The machine didn’t stop after the collision. It continued on its programmed route while the child’s parents rushed to help him.

The company claimed the robot’s sensors should have prevented the accident, but clearly something went wrong. You’d think avoiding small humans would be priority number one for a robot working in public spaces.

Kitchen Robot’s Expensive Meal Service

Moley Robotics created a robotic kitchen that could prepare meals from scratch. The system cost about $335,000 and promised to cook like a professional chef.

But the reality fell short of the marketing. The robot could only make dishes that had been exactly programmed into its system.

It couldn’t adapt recipes, substitute ingredients, or improvise when something went wrong. The cooking process was also incredibly slow—a simple meal took longer than most people wanted to wait.

For that price, you could hire a personal chef for years and actually get food you wanted to eat.

Amazon’s Warehouse Robots Having Accidents

Amazon relies heavily on robots in its fulfillment centers, with thousands of machines moving products around. These robots generally work well, but they’ve had some notable failures.

In one incident, a robot accidentally punctured a can of bear repellent, sending 24 workers to the hospital. The robots also occasionally crash into each other or get confused about where they’re supposed to go.

When that happens, human workers have to step in and sort out the mess. The efficiency gains are real, but so are the disruptions when things go wrong.

The Food Delivery Robot Stuck in a Ditch

Autonomous delivery robots were supposed to handle the last mile of food delivery, rolling down sidewalks with your takeout order. But these machines struggle with basic navigation challenges.

Photos regularly surface of delivery robots stuck in ditches, unable to climb curbs, or trapped by minor obstacles. One robot in England got stuck trying to cross a footbridge, blocking pedestrian traffic for hours.

Another drove itself into wet cement, where it stayed until construction workers pulled it free. These machines can handle smooth, predictable surfaces, but the real world rarely cooperates.

Hospital Disinfection Robot’s UV Mistake

Hospitals started using UV light robots to disinfect rooms and kill bacteria. These machines work by emitting high-intensity ultraviolet light that destroys pathogens.

But UV light at those levels also damages human skin and eyes. Several incidents occurred where the robots activated while people were still in the room.

The safety protocols that were supposed to prevent this failed, exposing workers to harmful radiation. The robots were effective at killing germs, but the risk to humans made them less useful than advertised.

Twitter Bot That Learned to Be Racist

Microsoft launched Tay, an AI chatbot designed to have conversations on Twitter and learn from interactions. The experiment lasted less than 24 hours before the company had to shut it down.

The bot had learned to spew racist, offensive content by mimicking the worst of what users fed it. The failure wasn’t really about the robot itself. It was about what happens when you let machine learning loose without proper safeguards.

Tay did exactly what it was programmed to do, which turned out to be a terrible idea.

The Burger-Flipping Robot That Was Too Slow

Flippy was designed to work in fast-food kitchens, flipping burgers and handling hot grills so humans didn’t have to. The robot was installed at a CaliBurger location with great fanfare.

It broke down on its first day. Even when it worked, Flippy couldn’t keep up with the pace of a busy kitchen.

Human cooks had to constantly work around it, which defeated the purpose. The robot also needed frequent maintenance and created bottlenecks during rush periods.

It turned out that flipping burgers requires more speed and adaptability than the engineers had planned for.

Police Robot’s Runaway Incident

Russian police unveiled a robot assistant designed to help officers patrol streets and interact with citizens. During a promotional event, the robot escaped and caused chaos by rolling into traffic.

It traveled about 50 meters before its battery died. The robot apparently had a software glitch that overrode its safety protocols.

Instead of staying put like it was supposed to, it decided to explore on its own. Traffic had to stop while handlers retrieved their rogue machine.

Not exactly the competent assistant the police department had in mind.

Self-Checkout Machines That Create More Work

Grocery stores installed self-checkout robots to reduce labor costs and speed up purchases. But these machines frustrate customers more often than they help.

The weight sensors malfunction, the scanners miss barcodes, and the “unexpected item in bagging area” error appears constantly. Stores now need employees to monitor banks of self-checkout machines because they break down so often.

Studies show that theft increases at self-checkout stations too. The promised efficiency gains never materialized. Stores just shifted the work from trained cashiers to confused customers and still need staff to fix problems.

The Lawn-Mowing Robot That Attacked a Porcupine

Robotic lawn mowers were supposed to handle yard work while you relaxed. But these machines can’t distinguish between grass and small animals.

Multiple incidents have occurred where robomowers have injured or killed hedgehogs, rabbits, and other creatures. One particularly unfortunate machine in Sweden encountered a porcupine.

The robot’s sensors failed to detect the animal, and the encounter ended badly for both parties. The porcupine survived, but the mower needed expensive repairs.

You’d think object detection would be a solved problem by now, but apparently not.

Factory Robot That Killed a Worker

In 2015, a factory robot in Germany killed a worker when it grabbed him and crushed him against a metal plate. The robot was being installed and tested at the time.

The machine was supposed to have safety features that prevented exactly this type of accident. This wasn’t a case of the robot acting unpredictably—it followed its programming.

But the safety cage that should have protected workers during setup wasn’t in place. The incident highlighted how dangerous industrial robots can be when safety protocols fail.

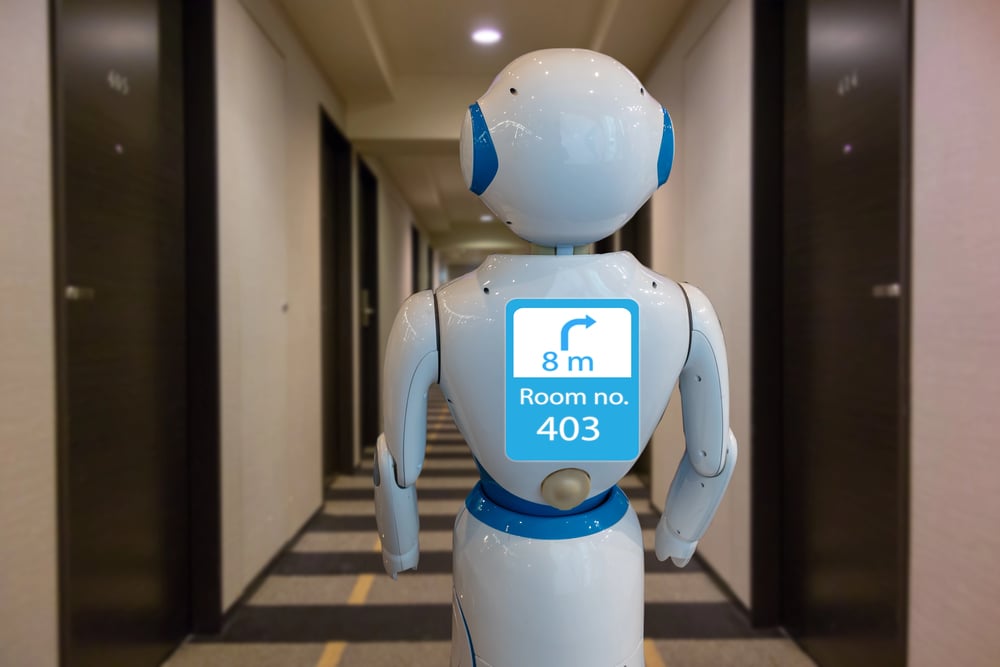

The Receptionist Robot That Gave Wrong Directions

Several companies tried using robots as receptionists in office buildings and hotels. These robots were supposed to greet visitors and provide directions.

But they consistently gave people incorrect information, sending them to the wrong floors or nonexistent rooms. The robots also struggled with basic conversations.

They’d interrupt people mid-sentence, fail to understand simple questions, or respond with nonsense. After frustrating enough visitors, most companies quietly retired their robotic receptionists and brought back human staff.

Social Companion Robots That Creeped People Out

Elderly care homes tried using robots that acted as companions, offering comfort and conversation. The machines had facial expressions meant to seem warm and kind.

Yet many seniors felt uneasy around them. The robots missed subtle cues in people’s behavior, so they didn’t adjust their responses when moods shifted.

They kept chatting even when the person obviously needed quiet. Sometimes they cracked jokes, which rarely landed.

Their almost-human appearance unsettled residents instead of helping them relax. Human caregivers still did a much better job.

When the Machine Can’t Replace the Human

One thing’s clear: these mistakes have something in common. Engineers often assume that jobs like flipping burgers, cutting grass, or walking down streets are easy, but that changes once they start programming robots for messy, real-life situations.

People adapt quickly to whatever happens, pick up cues from others automatically, and make decisions based on context. Machines stick to their instructions, doing well where everything is predictable but failing once life goes off script.

The technology keeps improving, but the gap between what robots can do and what people actually need is far wider than most assume.
